In OIM 11g, entity adapters cannot be attached to the user form, so they have to be re-implemented as event handlers. I recently had a requirement to do exactly that. One advantage of event handlers is that you can combine multiple operations in a single handler. In this post I am going to share the code for one pre-process and one post-process event handler.

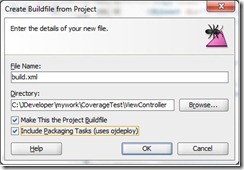

- The pre-process event handler generates the user login (and a home directory path) based on the employee type.

- The post-process event handler generates an email ID for the user if one was not entered initially.

```java
package com.blogspot.ramannanda.oim.handlers;

import java.io.Serializable;
import java.sql.Connection;
import java.sql.ResultSet;
import java.sql.Statement;
import java.util.HashMap;

import oracle.adf.share.logging.ADFLogger;
import oracle.iam.identity.usermgmt.api.UserManagerConstants;
import oracle.iam.platform.Platform;
import oracle.iam.platform.context.ContextAware;
import oracle.iam.platform.kernel.spi.PreProcessHandler;
import oracle.iam.platform.kernel.vo.AbstractGenericOrchestration;
import oracle.iam.platform.kernel.vo.BulkEventResult;
import oracle.iam.platform.kernel.vo.BulkOrchestration;
import oracle.iam.platform.kernel.vo.EventResult;
import oracle.iam.platform.kernel.vo.Orchestration;

import org.springframework.transaction.PlatformTransactionManager;
import org.springframework.transaction.TransactionDefinition;
import org.springframework.transaction.TransactionStatus;
import org.springframework.transaction.support.DefaultTransactionDefinition;

public class GenerateLoginID implements PreProcessHandler {

    private ADFLogger eventLogger =
        ADFLogger.createADFLogger(GenerateLoginID.class);

    private PlatformTransactionManager txManager =
        Platform.getPlatformTransactionManager();

    public GenerateLoginID() {
        super();
    }

    /**
     * Reads a parameter from the orchestration map, unwrapping ContextAware values.
     */
    private String getParameterValue(HashMap<String, Serializable> parameters,
                                     String key) {
        Serializable raw = parameters.get(key);
        return (raw instanceof ContextAware) ?
               (String)((ContextAware)raw).getObjectValue() : (String)raw;
    }

    /**
     * Populates the user login (and home directory) for a single create.
     *
     * @param processId the process ID
     * @param eventId the event ID
     * @param orchestration the orchestration holding the new user's attributes
     * @return the event result
     */
    public EventResult execute(long processId, long eventId,
                               Orchestration orchestration) {
        String methodName =
            Thread.currentThread().getStackTrace()[1].getMethodName();
        eventLogger.entering(methodName,
                             "params :[" + processId + "," + eventId + "]");
        HashMap<String, Serializable> map = orchestration.getParameters();
        String employeeType =
            getParameterValue(map, UserManagerConstants.AttributeName.EMPTYPE.getId());
        eventLogger.info("[" + methodName + "] Employee Type " + employeeType);
        String userId = generateEMPID(employeeType);
        eventLogger.info("[" + methodName + "] got user Id " + userId);
        if (userId != null) {
            map.put(UserManagerConstants.AttributeName.USER_LOGIN.getId(), userId);
            // generate the home directory: here we are updating another (custom) attribute
            map.put("Home Directory", "/home/" + userId);
        }
        return new EventResult();
    }

    /**
     * Populates the user login (and home directory) for a bulk create.
     *
     * @param processId the process ID
     * @param eventId the event ID
     * @param bulkOrchestration the orchestration holding each user's attributes
     * @return the bulk event result
     */
    public BulkEventResult execute(long processId, long eventId,
                                   BulkOrchestration bulkOrchestration) {
        HashMap<String, Serializable>[] params =
            bulkOrchestration.getBulkParameters();
        for (HashMap<String, Serializable> orchParam : params) {
            String employeeType =
                getParameterValue(orchParam, UserManagerConstants.AttributeName.EMPTYPE.getId());
            String userId = generateEMPID(employeeType);
            if (userId != null) {
                orchParam.put(UserManagerConstants.AttributeName.USER_LOGIN.getId(), userId);
                // custom attribute
                orchParam.put("Home Directory", "/home/" + userId);
            }
        }
        return new BulkEventResult();
    }

    public void compensate(long l, long l1,
                           AbstractGenericOrchestration abstractGenericOrchestration) {
    }

    public boolean cancel(long l, long l1,
                          AbstractGenericOrchestration abstractGenericOrchestration) {
        return false;
    }

    public void initialize(HashMap<String, String> hashMap) {
    }

    /**
     * Reads the next login ID from the sequence for the given employee type, in its
     * own transaction. Returns null if the type is unknown or the query fails.
     */
    private String generateEMPID(String employeeType) {
        String methodName =
            Thread.currentThread().getStackTrace()[1].getMethodName();
        // pick the sequence first; the constant comes first so a null type cannot throw
        String query;
        if ("Full-Time".equalsIgnoreCase(employeeType)) {
            query = "select USERID_FT.nextval from dual";
        } else if ("Temp".equalsIgnoreCase(employeeType)) {
            query = "select USERID_TMP.nextval from dual";
        } else if ("Consultant".equalsIgnoreCase(employeeType)) {
            query = "select USERID_CONS.nextval from dual";
        } else {
            // other conditions here
            eventLogger.info("[" + methodName + "] No sequence for employee type " + employeeType);
            return null;
        }
        Long id = null;
        Connection con = null;
        Statement st = null;
        ResultSet rs = null;
        TransactionStatus txStatus =
            txManager.getTransaction(new DefaultTransactionDefinition(TransactionDefinition.PROPAGATION_REQUIRES_NEW));
        boolean rollback = false;
        try {
            con = Platform.getOperationalDS().getConnection();
            st = con.createStatement();
            eventLogger.info("[" + methodName + "] Before Executing query");
            rs = st.executeQuery(query);
            if (rs.next()) {
                id = rs.getLong(1);
            }
        } catch (Exception e) {
            rollback = true;
            eventLogger.severe("[" + methodName + "] Error occurred in execution: " + e.getMessage());
        } finally {
            // close the JDBC resources in reverse order of creation
            try {
                if (rs != null) {
                    rs.close();
                }
                if (st != null) {
                    st.close();
                }
                if (con != null) {
                    con.close();
                }
            } catch (Exception e) {
                eventLogger.severe("[" + methodName + "] Error occurred closing resources: " + e.getMessage());
            }
            // end the transaction even if closing a resource failed
            try {
                if (rollback) {
                    txManager.rollback(txStatus);
                } else {
                    txManager.commit(txStatus);
                }
            } catch (Exception e) {
                eventLogger.severe("[" + methodName + "] Error occurred ending transaction: " + e.getMessage());
            }
        }
        return (id != null) ? id.toString() : null;
    }
}
```

Because this is a pre-process event handler, all you have to do is put the new attribute values into the orchestration parameter map, and they will be saved in the user profile. The `generateEMPID` method uses the transaction manager API to read the next value from the database sequence that corresponds to the employee type.
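If you need more employee types than the three handled above, the `if/else` chain in `generateEMPID` can be replaced by a lookup table. The following is a minimal standalone sketch of that idea. The class `EmployeeSequences` and its methods are hypothetical and not part of OIM. The sequence names mirror the ones used in the handler and are assumed to exist in the OIM operational schema. Null and unmapped types return `null`, which leaves the caller to decide what to do.

```java
import java.util.Collections;
import java.util.HashMap;
import java.util.Locale;
import java.util.Map;

// Hypothetical helper, not part of OIM: maps an employee type to the database
// sequence used for its login IDs. Sequence names mirror the handler above.
public final class EmployeeSequences {

    private static final Map<String, String> SEQUENCE_BY_TYPE;

    static {
        Map<String, String> m = new HashMap<String, String>();
        m.put("full-time", "USERID_FT");
        m.put("temp", "USERID_TMP");
        m.put("consultant", "USERID_CONS");
        // add further employee types here
        SEQUENCE_BY_TYPE = Collections.unmodifiableMap(m);
    }

    private EmployeeSequences() {
    }

    /** Case-insensitive lookup ignoring surrounding whitespace; null for a null or unmapped type. */
    public static String sequenceFor(String employeeType) {
        if (employeeType == null) {
            return null;
        }
        return SEQUENCE_BY_TYPE.get(employeeType.trim().toLowerCase(Locale.ROOT));
    }

    /** Builds the Oracle "nextval" query, or returns null if no sequence applies. */
    public static String nextValQuery(String employeeType) {
        String sequence = sequenceFor(employeeType);
        return (sequence == null) ? null : "select " + sequence + ".nextval from dual";
    }

    public static void main(String[] args) {
        System.out.println(nextValQuery("Full-Time")); // select USERID_FT.nextval from dual
        System.out.println(nextValQuery("Intern"));    // null
    }
}
```

The sequence name comes from a fixed map rather than from user input, so concatenating it into the SQL string does not open an injection path.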

The snippet for the post-process event handler is shown below.

```java
package com.blogspot.ramannanda.oim.handlers;

import java.io.Serializable;
import java.util.HashMap;

import oracle.adf.share.logging.ADFLogger;
import oracle.iam.identity.usermgmt.api.UserManagerConstants;
import oracle.iam.platform.Platform;
import oracle.iam.platform.context.ContextAware;
import oracle.iam.platform.entitymgr.EntityManager;
import oracle.iam.platform.kernel.spi.PostProcessHandler;
import oracle.iam.platform.kernel.vo.AbstractGenericOrchestration;
import oracle.iam.platform.kernel.vo.BulkEventResult;
import oracle.iam.platform.kernel.vo.BulkOrchestration;
import oracle.iam.platform.kernel.vo.EventResult;
import oracle.iam.platform.kernel.vo.Orchestration;

public class GenerateEmailId implements PostProcessHandler {

    private ADFLogger eventLogger = ADFLogger.createADFLogger(GenerateEmailId.class);

    public GenerateEmailId() {
        super();
    }

    /**
     * Gets the parameter value from the parameter map, unwrapping ContextAware values.
     * @param parameters the orchestration parameters
     * @param key the attribute name
     * @return the attribute value as a String, or null if absent
     */
    private String getParameterValue(HashMap<String, Serializable> parameters, String key) {
        Serializable raw = parameters.get(key);
        return (raw instanceof ContextAware)
               ? (String) ((ContextAware) raw).getObjectValue()
               : (String) raw;
    }

    /**
     * Populates the email ID from the first name and last name if none was entered.
     * @param processId the process ID
     * @param eventId the event ID
     * @param orchestration the orchestration for the created user
     * @return null, because this handler is registered as asynchronous
     */
    public EventResult execute(long processId, long eventId,
                               Orchestration orchestration) {
        String methodName =
            Thread.currentThread().getStackTrace()[1].getMethodName();
        eventLogger.entering(methodName, "params :[" + processId + "," + eventId + "]");
        EntityManager mgr = Platform.getService(EntityManager.class);
        HashMap<String, Serializable> map = orchestration.getParameters();
        String email = getParameterValue(map, UserManagerConstants.AttributeName.EMAIL.getId());
        if (email == null || email.isEmpty()) {
            String firstName = getParameterValue(map, UserManagerConstants.AttributeName.FIRSTNAME.getId());
            String lastName = getParameterValue(map, UserManagerConstants.AttributeName.LASTNAME.getId());
            String generatedEmail = generateEmail(firstName, lastName);
            // only update the user if an email could actually be generated
            if (generatedEmail != null) {
                HashMap<String, Object> modifyMap = new HashMap<String, Object>();
                modifyMap.put(UserManagerConstants.AttributeName.EMAIL.getId(), generatedEmail);
                try {
                    mgr.modifyEntity(orchestration.getTarget().getType(),
                                     orchestration.getTarget().getEntityId(), modifyMap);
                } catch (Exception e) {
                    eventLogger.severe("[" + methodName + "] Error occurred in updating user: " + e.getMessage());
                }
            }
        }
        // asynchronous (sync="FALSE") handlers must return null
        return null;
    }

    /**
     * Generates the email ID. This is only a stub and is not implemented.
     * @param firstName the user's first name
     * @param lastName the user's last name
     * @return the generated email ID, or null
     */
    private String generateEmail(String firstName, String lastName) {
        return null;
    }

    public BulkEventResult execute(long l, long l1,
                                   BulkOrchestration bulkOrchestration) {
        // bulk creates are not handled; asynchronous handlers return null
        return null;
    }

    public void compensate(long l, long l1,
                           AbstractGenericOrchestration abstractGenericOrchestration) {
    }

    public boolean cancel(long l, long l1,
                          AbstractGenericOrchestration abstractGenericOrchestration) {
        return false;
    }

    public void initialize(HashMap<String, String> hashMap) {
    }
}
```

Note that because this handler is registered as asynchronous (`sync="FALSE"` in the event handler XML), it must return `null` as its event result. The `generateEmail` method is just a stub and is not actually implemented.
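One possible way to fill in the stub is to build `first.last@domain` from the user's names. The standalone sketch below does this. The class name `EmailGenerator` and the domain `example.com` are placeholders. It does not check whether the address is already in use, and a real implementation would need to do that before returning it.

```java
import java.text.Normalizer;
import java.util.Locale;

// Hypothetical sketch of the generateEmail stub: builds "first.last@<domain>".
// EMAIL_DOMAIN is an assumed placeholder, not something OIM provides.
public final class EmailGenerator {

    private static final String EMAIL_DOMAIN = "example.com";

    private EmailGenerator() {
    }

    /** Lower-cases, strips accents and drops everything except a-z and 0-9. */
    static String normalize(String name) {
        if (name == null) {
            return "";
        }
        String decomposed = Normalizer.normalize(name.trim(), Normalizer.Form.NFD);
        return decomposed.replaceAll("\\p{M}", "")  // remove combining accent marks
                .toLowerCase(Locale.ROOT)
                .replaceAll("[^a-z0-9]", "");       // drop spaces, apostrophes, hyphens
    }

    /** Returns first.last@domain, or null when neither name yields any characters. */
    public static String generateEmail(String firstName, String lastName) {
        String first = normalize(firstName);
        String last = normalize(lastName);
        if (first.isEmpty() && last.isEmpty()) {
            return null;
        }
        String local = first.isEmpty() ? last
                     : last.isEmpty() ? first
                     : first + "." + last;
        return local + "@" + EMAIL_DOMAIN;
    }

    public static void main(String[] args) {
        System.out.println(generateEmail("Jos\u00e9", "O'Brien")); // jose.obrien@example.com
    }
}
```

Returning `null` when no usable name is present fits the handler above, which only calls `modifyEntity` when an address was actually generated.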

The event handler XML that you need to import into MDS is shown below.

```xml
<?xml version="1.0" encoding="UTF-8"?>
<eventhandlers xmlns="http://www.oracle.com/schema/oim/platform/kernel"
               xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
               xsi:schemaLocation="http://www.oracle.com/schema/oim/platform/kernel orchestration-handlers.xsd">
    <!-- the pre-process handler returns an EventResult, so it is registered as synchronous -->
    <action-handler class="com.blogspot.ramannanda.oim.handlers.GenerateLoginID"
                    entity-type="User" operation="CREATE" name="GenerateLoginID"
                    stage="preprocess" order="1000" sync="TRUE"/>
    <action-handler class="com.blogspot.ramannanda.oim.handlers.GenerateEmailId"
                    entity-type="User" operation="CREATE" name="GenerateEmailId"
                    stage="postprocess" order="1001" sync="FALSE"/>
</eventhandlers>
```

For logging, the snippets use the `ADFLogger` implementation. To enable it, add a logger named `com.blogspot` to the OIM server's logging configuration. You can also add a log handler to write the logging output to a separate file.
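A single logger named `com.blogspot` is enough because of how logger names work. `ADFLogger` is a wrapper around `java.util.logging`, whose logger names form a dot-separated hierarchy. The sketch below uses plain `java.util.logging` rather than `ADFLogger`. It assumes that `ADFLogger.createADFLogger(Class)` names each handler's logger after its fully qualified class name. Under that assumption, a level set on `com.blogspot` is inherited by both handler loggers unless they are given a level of their own.

```java
import java.util.logging.Level;
import java.util.logging.Logger;

// Sketch with plain java.util.logging (which ADFLogger wraps). Assumes the
// handler loggers are named after their classes, i.e. they sit under "com.blogspot".
public final class LoggerHierarchyDemo {

    // Keep a strong reference: JUL holds loggers weakly, so an unreferenced
    // parent logger (and the level set on it) could be garbage-collected.
    private static final Logger PARENT = Logger.getLogger("com.blogspot");

    public static void main(String[] args) {
        PARENT.setLevel(Level.FINE);

        Logger handlerLogger =
            Logger.getLogger("com.blogspot.ramannanda.oim.handlers.GenerateLoginID");

        // The child has no level of its own, so it inherits FINE from "com.blogspot".
        System.out.println(handlerLogger.getLevel());               // null
        System.out.println(handlerLogger.isLoggable(Level.FINE));   // true
        System.out.println(handlerLogger.isLoggable(Level.FINEST)); // false
    }
}
```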