You might be aware that text can be stored in many different character encodings. It is generally safe to use a UTF encoding such as UTF-8, and you would at least expect websites to declare the encoding in their responses. Alas, some sites send content without specifying the character encoding they use.

To detect the encoding in such cases, you can use finite state machines (FSMs). The input is split into individual bytes, which are passed to several state machines, each of which models a different encoding scheme. For each byte it receives, a state machine can identify a sequence that is unique to its encoding, continue waiting for more input, or report an error (meaning the input cannot be in that encoding). At the end of the operation you would generally expect one specific encoding to have been identified. If there is not enough input to decide, you fall back to a default encoding. You can read more about this in Universal Charset Detection.
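To make the "identify, continue, or error" idea concrete, here is a minimal sketch of a single state machine for UTF-8. It is a simplified, hypothetical illustration: the class name and the `CONTINUE`/`ITS_ME`/`ERROR` results are my own, loosely modeled on the states described for Mozilla's detector, and this is not juniversalchardet's actual state tables. For brevity it also does not reject overlong forms or surrogate code points.

```java
import java.nio.charset.StandardCharsets;

// Hypothetical, simplified UTF-8 coding state machine (not Mozilla's real tables).
public class Utf8StateMachine {

    /** Result of feeding one byte to the machine. */
    public enum Result { CONTINUE, ITS_ME, ERROR }

    private int pending = 0;        // continuation bytes still expected
    private boolean failed = false; // once an error is seen, the machine stays failed

    public Result next(byte b) {
        if (failed) {
            return Result.ERROR;
        }
        int u = b & 0xFF;
        if (pending > 0) {
            // Inside a multi-byte sequence: only 10xxxxxx is legal here.
            if ((u & 0xC0) != 0x80) {
                failed = true;
                return Result.ERROR;
            }
            pending--;
            // A completed multi-byte sequence is strong evidence for UTF-8.
            return pending == 0 ? Result.ITS_ME : Result.CONTINUE;
        }
        if (u < 0x80) {
            return Result.CONTINUE;          // plain ASCII, legal in many encodings
        } else if ((u & 0xE0) == 0xC0) {
            pending = 1;                     // start of a 2-byte sequence
        } else if ((u & 0xF0) == 0xE0) {
            pending = 2;                     // start of a 3-byte sequence
        } else if ((u & 0xF8) == 0xF0) {
            pending = 3;                     // start of a 4-byte sequence
        } else {
            failed = true;                   // byte can never start a UTF-8 sequence
            return Result.ERROR;
        }
        return Result.CONTINUE;
    }

    /** Feeds every byte to a fresh machine and reports the strongest signal seen. */
    public static Result classify(byte[] data) {
        Utf8StateMachine sm = new Utf8StateMachine();
        Result best = Result.CONTINUE;
        for (byte b : data) {
            Result r = sm.next(b);
            if (r == Result.ERROR) {
                return Result.ERROR;         // this encoding is ruled out
            }
            if (r == Result.ITS_ME) {
                best = Result.ITS_ME;
            }
        }
        return best;
    }

    public static void main(String[] args) {
        System.out.println(classify("plain ascii".getBytes(StandardCharsets.US_ASCII)));             // CONTINUE
        System.out.println(classify("caf\u00e9".getBytes(StandardCharsets.UTF_8)));                 // ITS_ME
        System.out.println(classify("caf\u00e9 au lait".getBytes(StandardCharsets.ISO_8859_1)));    // ERROR
    }
}
```

A full detector would run one such machine per candidate encoding over the same bytes, drop any machine that reports an error, and stop early once one of them reports a sequence unique to its encoding.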

Mozilla already has a library for this, universalchardet, and there is a Java port of it, juniversalchardet.

It’s really simple to use, as the example below shows:

public static String detectCharset(InputStream is) throws IOException {

UniversalDetector detector = new UniversalDetector(null);

String encoding=null;

byte[] buf = new byte[1000];

// (2)

int nread;

while ((nread = is.read(buf)) > 0 && !detector.isDone()) {

detector.handleData(buf, 0, nread);

}

// (3)

detector.dataEnd();

// (4)

encoding = detector.getDetectedCharset();

if (encoding != null) {

logger.info("Detected encoding = " + encoding);

} else {

encoding = "ISO-8859-1";

logger.info("No encoding detected.");

}

// (5)

detector.reset();

return encoding;

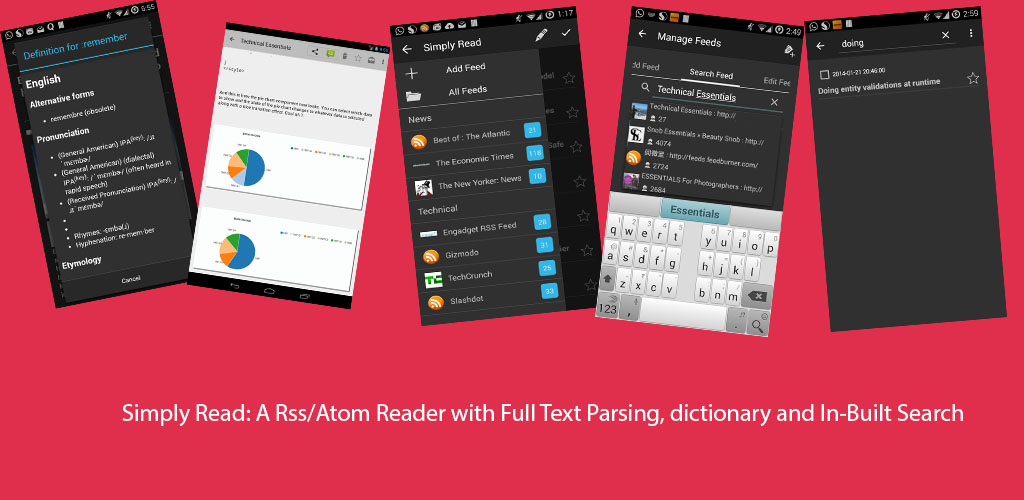

}This can be really useful, if you are planning to create a full text parser and the websites don’t return the content encoding that they are using. I used this while designing the full text parser for my application.
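In a full text parser, detection works best as a fallback. The sketch below is a hypothetical helper (`ContentDecoder`, `charsetFromContentType` and `decode` are illustrative names, not part of juniversalchardet) that prefers the charset declared in the HTTP `Content-Type` header and only calls a detector when no usable charset is declared. The detector is passed in as a `Function<byte[], String>` so that the sketch compiles without juniversalchardet on the classpath; in practice it could wrap the `detectCharset` method above.

```java
import java.nio.charset.Charset;
import java.nio.charset.StandardCharsets;
import java.util.Locale;
import java.util.function.Function;

// Hypothetical helper: prefer the declared charset, fall back to detection.
public class ContentDecoder {

    /** Returns the charset parameter of a Content-Type value, or null if absent. */
    public static String charsetFromContentType(String contentType) {
        if (contentType == null) {
            return null;
        }
        for (String part : contentType.split(";")) {
            String p = part.trim();
            if (p.toLowerCase(Locale.ROOT).startsWith("charset=")) {
                String value = p.substring("charset=".length()).trim();
                // Remove optional quotes, e.g. charset="utf-8"
                if (value.length() >= 2 && value.startsWith("\"") && value.endsWith("\"")) {
                    value = value.substring(1, value.length() - 1);
                }
                return value.isEmpty() ? null : value;
            }
        }
        return null;
    }

    /** True if the JVM supports this charset name; false for null or malformed names. */
    private static boolean usable(String name) {
        try {
            return name != null && Charset.isSupported(name);
        } catch (IllegalArgumentException e) { // also covers IllegalCharsetNameException
            return false;
        }
    }

    /**
     * Decodes the body with the declared charset if usable, otherwise with the
     * detector's guess, otherwise with ISO-8859-1 as a last resort.
     */
    public static String decode(byte[] body, String contentType,
                                Function<byte[], String> detector) {
        String name = charsetFromContentType(contentType);
        if (!usable(name)) {
            name = detector.apply(body);
        }
        Charset charset = usable(name) ? Charset.forName(name) : StandardCharsets.ISO_8859_1;
        return new String(body, charset);
    }
}
```

For example, with an `HttpURLConnection` you could pass `conn.getContentType()` as the header value and a lambda that calls `detectCharset(new ByteArrayInputStream(b))`, wrapping its checked `IOException` in an `UncheckedIOException`.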

Hope this is useful for others out there.